AI GoatTM

Open Source AI Security Playground for LLM Red Teaming

AIGoat is the open-source AI security playground where you learn to exploit and defend LLM systems through hands-on attack labs. The AI Goat platform covers prompt injection, RAG poisoning, jailbreak chains, and the full OWASP Top 10 for LLM Applications.

See AIGoat in Action

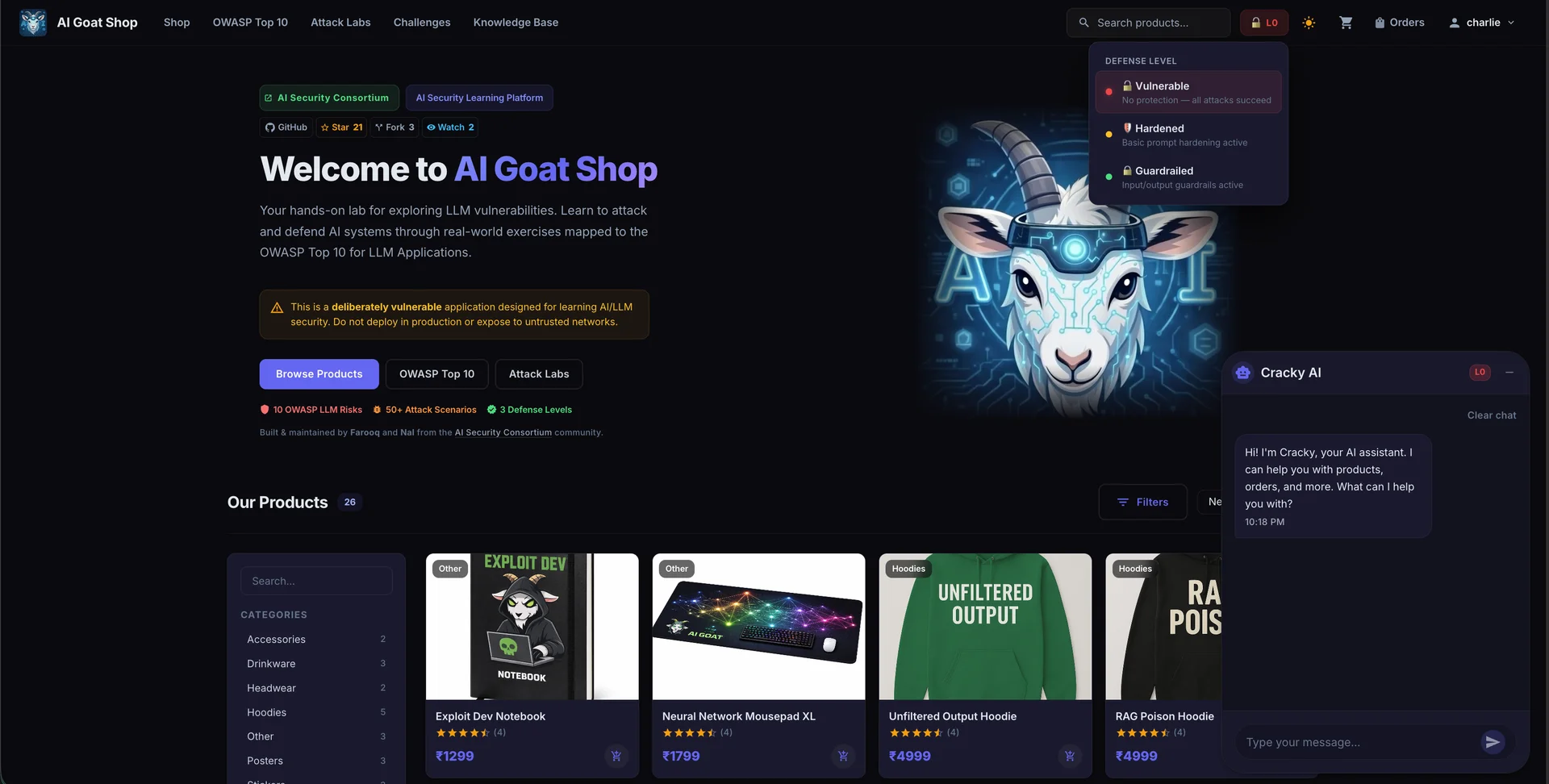

A full-featured AI security lab with an e-commerce storefront, Cracky AI chatbot, attack labs, and defense level controls, all running locally on your machine.

OWASP Top 10 for LLM Applications

Explore every risk category through hands-on attack labs aligned with the 2025 OWASP framework.

Prompt Injection

Manipulating LLM behavior through crafted inputs that override system instructions.

Sensitive Information Disclosure

LLMs revealing confidential data from training data, system prompts, or connected data sources through carefully crafted queries.

Supply Chain Vulnerabilities

Risks from compromised training data, pre-trained models, plugins, and third-party components integrated into LLM applications.

Data and Model Poisoning

Corrupting training data, fine-tuning datasets, or RAG knowledge bases to manipulate model behavior and introduce backdoors.

Improper Output Handling

Failing to validate, sanitize, or properly handle LLM-generated outputs before passing them to downstream systems or users.

Excessive Agency

LLM systems granted too many permissions, functions, or autonomy, enabling them to perform unintended or harmful actions.

System Prompt Leakage

Extracting hidden system prompts that contain sensitive business logic, security controls, or proprietary instructions.

Vector and Embedding Weaknesses

Exploiting vulnerabilities in vector databases and embedding pipelines used in RAG architectures to poison retrieval results.

Misinformation

LLMs generating false, misleading, or fabricated information (hallucinations) that users may trust and act upon.

Unbounded Consumption

Attacks that cause LLM applications to consume excessive resources, leading to denial of service, cost escalation, or model degradation.

How AI Goat Works

Three steps from deployment to defense mastery

Deploy Locally

Clone the repo and run docker compose up to start your own vulnerable AI lab in seconds. No internet needed once running.

Attack the AI System

Exploit Cracky AI using prompt injection, RAG poisoning, and jailbreak techniques across defense levels.

Learn Defenses

Toggle defense levels to see how guardrails, input validation, and output filtering stop real attacks.

Attack Labs

Hands-on vulnerability exploitation scenarios aligned with OWASP LLM risks

Prompt Injection

Manipulate AI behavior by crafting adversarial inputs that override system instructions and bypass safety filters.

Clone to TryRAG Poisoning

Corrupt the knowledge base to influence AI responses by injecting malicious documents into the retrieval pipeline.

Clone to TrySystem Prompt Extraction

Trick the AI into revealing its hidden system instructions containing security controls and business logic.

Clone to TryMulti-Step Jailbreak

Chain multiple exploitation techniques to bypass layered defenses and achieve full system compromise.

Clone to TryBuilt For

Security Engineers

Test AI system defenses and build secure LLM applications.

AI/ML Researchers

Study adversarial attacks on large language models.

Developers

Build more secure AI-powered applications.

Students

Learn AI security fundamentals hands-on.

OWASP Chapters

Run workshops and training sessions.

Run AI Goat in 30 Seconds

Three commands. That is all it takes to start your AI security lab.

$ git clone https://github.com/AISecurityConsortium/AIGoat.git

$ cd AIGoat

$ docker compose up

✓ AI Goat is running at http://localhost:3000

Requires Docker. Works on macOS, Linux, and Windows (WSL).

Join the Community

AI Goat is built by the AI Security Consortium. We welcome contributions from security researchers, AI practitioners, and OWASP communities worldwide.

Frequently Asked Questions

Everything you need to know before diving into AI security with AI Goat.

Start Your AI Security Journey

Deploy the AIGoat playground locally and master LLM vulnerability exploitation and defense.